For millions of YouTube content creators, a simple yellow dollar sign––signaling demonetization––portends the destruction of their entire careers, and perhaps––if enough creators leave––the end of YouTube itself.

This issue of demonetization was taken to new heights after a recent shooting at YouTube’s headquarters in San Bruno, California, when it was revealed that the shooter had been angry over the limited amount of money she was making after the video-sharing site had established new regulations on monetizable content. More broadly, this move toward the demonetization of controversial content, a move carried out via algorithms that replace human editorial judgment with automated equations, calls into question the extent to which online companies such as YouTube can suppress content creators’ freedom of speech.

Advertisers started fleeing YouTube in 2017, when Hezbollah began posting videos promoting terrorism and when a popular YouTuber named Felix Kjellberg, who is better known as “PewDiePie,” made a satirical video in which he paid a man to hold a sign saying “Death to all Jews.” In response to backlash from advertisers, YouTube implemented a machine learning algorithm that flags videos violating YouTube’s advertising policies and alerts their creators with a yellow demonetization icon. YouTube has increasingly depended on this algorithm to automatically determine which of the billions of videos watched daily are appropriate for both advertisers and viewers.

More recently, starting February 20, YouTube began having employees review the site’s top videos and instituted a new policy requiring channels to have 1,000 subscribers and 4,000 hours of watch time before ads could be attached to their videos. YouTube currently uses a deep learning algorithm to recommend videos to users. This algorithm, separate from the one that screens videos for advertiser-unfriendly content, is loosely modeled on the human brain’s neural connections, enabling computers to process information in many layers.

This phenomenon of stringent, algorithmic demonetization has been dubbed the “adpocalypse” by many YouTube creators, who feel that YouTube’s implementation of automated demonetization has made earning a living on the site far more difficult. YouTube considers topics like “sensitive social issues” or “tragedy and conflict” to be “not advertiser friendly,” or NAF––topics so broadly defined that many creators have lost up to half their income, according to NYMag.

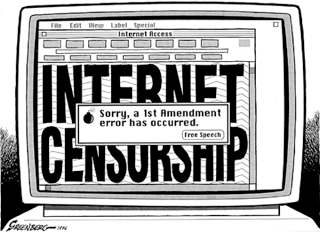

Equally important, YouTube’s demonetization policy raises issues surrounding free speech online. Prolific YouTube creator Casey Neistat, who posted a video in late 2017 lamenting YouTube’s new demonetization policies, believes freedom of speech is central to the backlash over demonetization. Neistat had previously posted a video drawing attention to a GoFundMe page he had created to raise money for the victims of the October 2017 Las Vegas shooting, with the intention of donating the profits from that video to those victims. However, Neistat’s video was demonetized, even as videos posted about the Las Vegas shooting on Jimmy Kimmel’s official YouTube channel were not. As such, YouTube’s prioritization of mainstream talk show hosts over lesser-known creators raises serious questions about the role of power relations on the site.

According to Jeffrey Rosen, President of the National Constitution Center, private companies like YouTube––which are entitled to regulate speech on their platforms as they see fit––nonetheless threaten free speech because their ubiquity makes them akin to public spaces.

“These digital platforms, as private corporations, are not formally restrained by the First Amendment,” Rosen said in a lecture at Harvard’s Kennedy School of Government, adding that such platforms face significant public pressure “to favor values such as dignity and safety rather than liberty and free expression.”

In addition to the perils they pose to the First Amendment, social media sites such as YouTube have increasingly become “masters of a new surveillance capitalism,” according to John Naughton, a Senior Research Fellow at Cambridge University’s Centre for Research in the Arts, Social Sciences and Humanities. Naughton argues that advertisers are YouTube’s and Facebook’s primary customers, and that users are, in fact, “the product.” Further, Naughton contends that democracy itself can be subverted through the perversion and exploitation of social media technologies such as those provided to creators on YouTube.

“It is the extraordinary levels of user engagement that allows Google and Facebook to operate as an unprecedented kind of commercial entity,” Naughton said. “There are aspects of the companies’ influence that were apparently not foreseen by the firms themselves either, including the way that their advertising systems would pressure groups, parties, and governments to covertly target voters with precisely calibrated political messages.”

Additionally, in a New York Times op-ed, sociologist Zeynep Tufekci argued that YouTube’s algorithm for suggesting videos to viewers––a different algorithm from the one used to determine monetizable content––functions as a means of subtly radicalizing viewers by serving them progressively more extreme content.

“It seems as if you are never ‘hard core’ enough for YouTube’s recommendation algorithm,” Tufekci wrote. “It promotes, recommends and disseminates videos in a manner that appears to constantly up the stakes. Given its billion or so users, YouTube may be one of the most powerful radicalizing instruments of the 21st century.”

Tufekci contends that YouTube’s algorithm exploits the innate human tendency to seek out engaging content by suggesting progressively more extreme videos, and that the company profits from the resulting advertising sales at the user’s expense. Likening YouTube to a restaurant serving unhealthy food, Tufekci suggests that while YouTube claims to promote content that users want, the platform enables the spread of videos filled with conspiracies, hate, and misinformation. These videos, Tufekci argues, affect children and teenagers when they use Google or YouTube in school to learn about new topics, especially as Google’s Chromebooks, which come preloaded with YouTube, make up a substantial share of the education market in the U.S.

A Wall Street Journal article written by Jack Nicas corroborates Tufekci’s analysis, claiming that YouTube’s recommendations, driven by its algorithm, promote “conspiracy theories, partisan viewpoints, and misleading videos even when those users haven’t shown interest in such content” in an effort to keep people hooked on the site. Nicas also notes that “when users show a political bias in what they choose to view, YouTube typically recommends videos that echo those biases, often with more-extreme viewpoints.”

YouTube’s Creator Academy videos, designed to explain YouTube’s algorithm to new creators, inadvertently validate both Tufekci’s and Nicas’s analyses in part by stating that one of the goals of the algorithm is “to get viewers to keep watching more of what they like.” While seemingly innocuous, YouTube’s desire to keep users perpetually captivated by, even addicted to, content may be good for its business model, but it has devastating consequences for people’s understanding of facts and promotes the dissemination of misinformation, conspiracies, and hate to a potentially uninformed populace.

Ultimately, as social media platforms increasingly become an integral––even foundational––part of people’s lives and careers, companies like YouTube must learn to anticipate subversive activity and proactively work with the creator community to ensure all members are being treated fairly and equally while simultaneously upholding the First Amendment.

Featured Image Source: CASP.
